Matt Hodgkinson, Research Integrity Manager at the UK Research Integrity Office, gives us an overview of how AI tools are shaping the future of publishing, and how reviewers, authors and publishers can adapt to thrive in this changing landscape.

The emergence of artificial intelligence (AI) technology over the last two decades is changing the way things are done in nearly every field, and academic publishing is no exception.

Traditionally, the publication process relied heavily on human intervention, involving researchers sharing their work through journals, peer review conducted by experts in the field, and manual indexing and retrieval of scholarly content. However, AI has propelled a paradigm shift by automating and augmenting various aspects of scholarly communication.

One of the most pivotal areas is peer review, where AI is transforming the way that research is evaluated. While peer review is a crucial step in ensuring the integrity of scientific research, it can be time-consuming and often depends on the availability and proficiency of reviewers. AI-powered tools have the potential to streamline the process, reduce bottlenecks and improve accuracy overall.

We speak with Matt Hodgkinson, Research Integrity Manager at the UK Research Integrity Office (UKRIO), about how AI tools are shaping the future of publishing, and how reviewers, authors and publishers can adapt to thrive in this changing landscape.

How is AI influencing your role (for better or for worse)?

We held off from commenting on generative AI at UKRIO until the initial hype had settled down. During that time, some clear consensus emerged – don’t list AI tools as authors, peer reviewers shouldn’t use them, and any use should be declared and carefully checked by the authors. I can’t pretend to be an expert on the technical side of AI, but there’s a lot of interest in how AI should be used, monitored, and regulated in academia and research and this is something we can advise on. In July, I wrote a piece for UKRIO on the use of AI tools in research and the following month I was part of an FSCI 2023 course about AI ethics and recorded a talk about AI and research integrity for UKRIO’s subscribers. No doubt this need for guidance will continue.

What are your thoughts on using chatbots in academia (and with peer review in mind)?

I was quite excited about generative AI late last year – I played with BLOOM and Stable Diffusion. However, the shine wore off quickly because I found the writing style bland and I couldn’t see a need for these tools in my work. The willingness of some academics to use unedited chatbot output in opinion pieces and to list it as an author is disappointing but predictable because new tech trends always turn heads. Some researchers have naively trusted Large Language Models (LLMs), but these tools too often produce false content. I don’t think they’re suitable for generating de novo content about scholarship – they are no substitute for reading the latest literature. LLMs as they stand now have no legitimate role in peer review because they are not validated for the critical appraisal of scholarly work and there are risks of breaching confidentiality. There are other promising automated tools specifically designed to check reporting and statistics. Where LLMs do have a place is in reworking existing content, such as turning bullet points into prose or making writing more formal, and this is particularly useful for non-native speakers.

What might keep publishers awake at night when it comes to AI and AI tools?

There are two sides to the misuse of tools – malice and incompetence. Malicious use will come from paper mills, which are already producing fake content. LLMs greatly lower the barrier to producing convincing fraud, because the phrasing is new each time, the language is fluent, and the content is produced within seconds. Generative AI might also be used to produce images that can evade algorithms designed to spot image manipulation. Incompetent use is also a problem, with users expecting that LLMs can analyse information, accurately summarise documents, do a literature review, or suggest references. The LLM will confidently assert that it has done what it was asked to, but it is just a sophisticated guessing tool and doesn’t have any real analytical ability.

You can read part 1 of this blog post here.


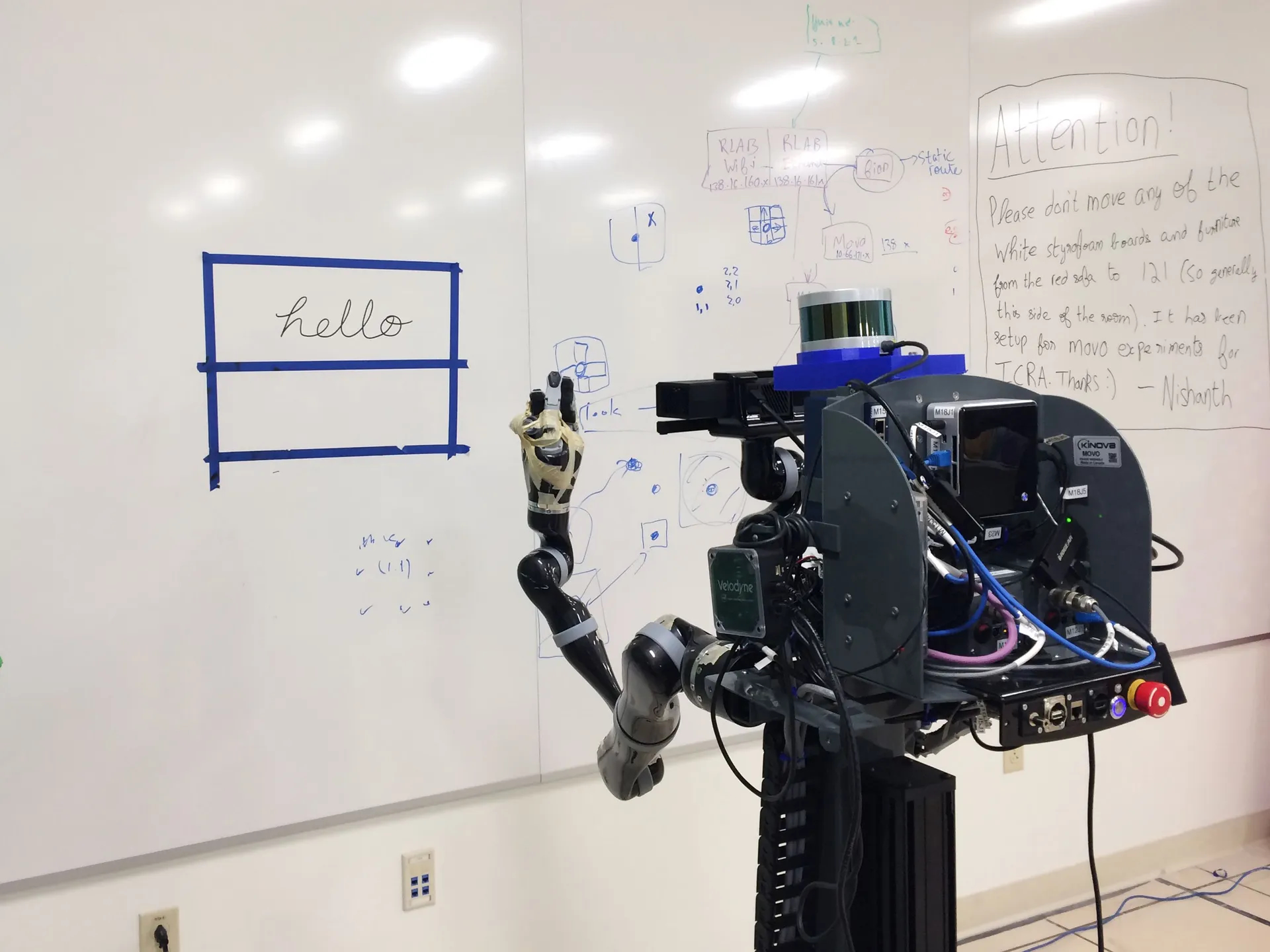

Image: Robot writing, credit: Atsunobu Kotani.